Apple Contractors Listened To Your Conversations With Siri

by Samra Anees

At this point, it’s common knowledge that voice assistants record snippets of speech when activated, for both testing and development purposes. Recently, Google contractors leaked over 1,000 snippets of private conversations that users didn’t know their Google Assistant was recording. Now, Apple’s program for grading Siri’s quality has been suspended because Siri was being activated at inappropriate times, resulting in the recording of several private and confidential conversations and moments.

Your conversations with Siri could put your personal data at risk.

This problem is not unique to Siri and Apple, and speaks to growing privacy concerns about the use of digital voice assistants.

Apple Contractors Have Access to a Bank of Private Conversations

Just as Google, Amazon, and other makers of voice assistants do, Apple passes on a portion of Siri recordings to a team of contractors who grade the interactions between users and Siri. This is meant to help Siri better understand what users say.

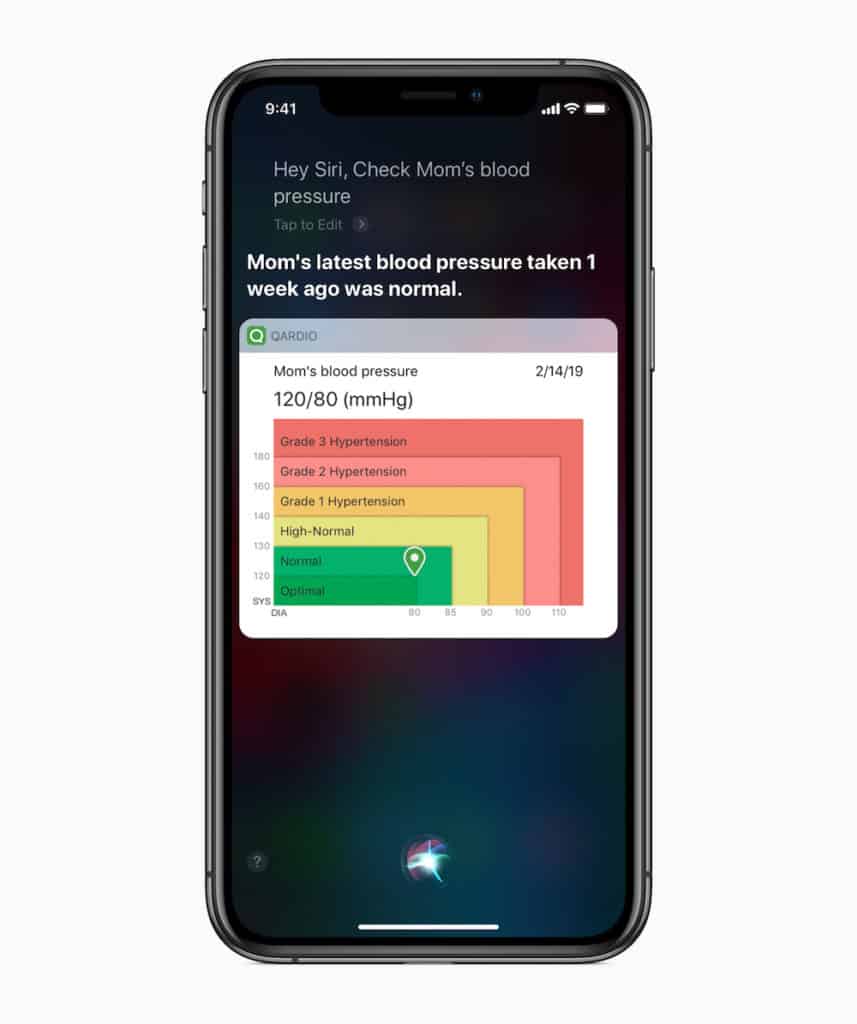

Siri is activated when someone says “Hey Siri,” or when an Apple Watch is raised and detects that someone is talking. While this can be convenient, it’s also a bit reckless, considering how many times a day people lift their hand without intending to talk to Siri. Contractors say that the Apple Watch and HomePod are the most common sources of accidental recordings.

However, Apple doesn’t explicitly disclose that it takes these recordings or that humans listen to them. While the recordings are decoupled from the user, they often contain sensitive information, such as names, addresses, business deals, and medical records, that can easily be used to re-identify the person. The contractors say that these accidental recordings were only to be reported as “technical problems,” but there were no structured procedures in place to handle them.

The main issues here are that Apple didn’t disclose its Siri-scoring practices and that a large number of contractors had access to potentially sensitive information about Apple users.

How Much Do People Care?

Even the people who analyzed the Siri recordings for Apple say that it’s unsettling how much data is available to the workers, but not everyone is as concerned about the situation. The logic behind this complacency is that, even though someone may have access to some of their private conversations, some people aren’t worried that those conversations or that information could be used against them.

That is likely the case for many people, and it’s easy to turn a blind eye when nothing significant is at stake. But the issue is more about taking preventative measures and setting boundaries than about which information you may or may not currently be worried about becoming public.

Bottom Line

With so much voice technology readily available to consumers today, the important takeaway is that if you own voice technology devices, you should assume there is a good chance your conversations are not private, regardless of whether you’re speaking to your voice assistant or not. Amazon Alexa, Google Assistant, and now Apple’s Siri have shown us that voice technology listens to us even when we’re not speaking to it. While Amazon, Google, and Apple are all taking measures to right their privacy-related wrongs, it’s better to be aware of the capabilities of your voice assistant, whether you use Alexa, Google Assistant, or Siri, than to trust that the technology always works exactly how it’s meant to.
