Apple M5 Pro MacBook powers Edge AI

By Jim Lundy

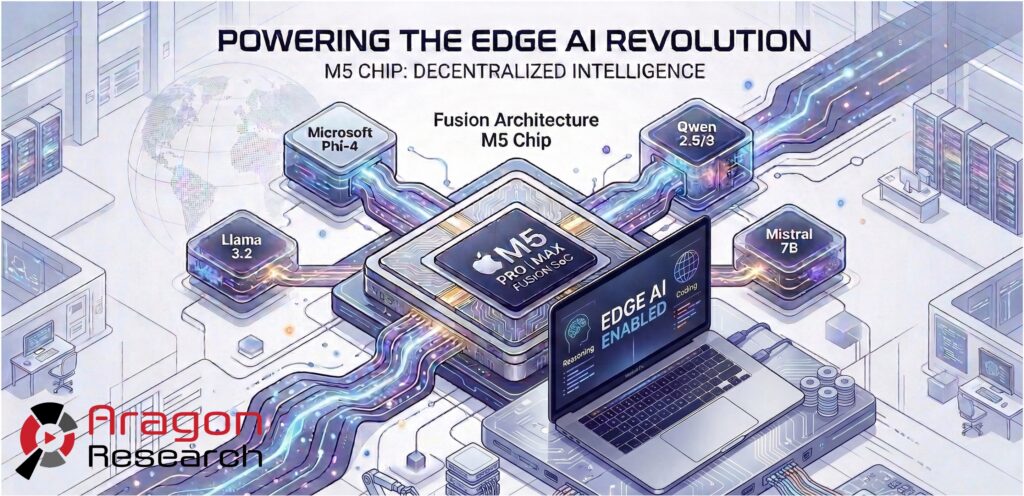

Apple just announced the new MacBook Pro featuring the M5 Pro and M5 Max chips, signaling a decisive shift in its silicon strategy toward on-device artificial intelligence. The update introduces a new Fusion Architecture that bonds two 3nm dies into a single system on a chip, scaling performance while maintaining low-latency unified memory access. The hardware specifications are formidable, with the M5 Max supporting up to 128GB of unified memory and 614GB/s of memory bandwidth. This blog post reviews the M5 MacBook Pro announcement and offers our analysis.

Why did Apple announce the M5 MacBook Pro?

The primary driver for this announcement is the need for substantial local compute power to handle generative AI workloads. Apple is moving beyond standard CPU and GPU increments by embedding a dedicated Neural Accelerator into every GPU core of the M5 family. This architectural change allows the M5 Max to deliver up to 4x the peak GPU compute for AI compared to the previous generation.

By doubling the starting storage and offering memory configurations that exceed typical laptop standards, Apple is targeting a specific class of professional who needs to run complex models without relying on cloud-based APIs. The move ensures that as software ecosystems transition to AI-integrated workflows, Apple hardware remains the baseline for performance.

Analysis

This release confirms that Apple is no longer just building a faster computer; it is building a dedicated local inference engine. While the industry has historically focused on cloud-resident Large Language Models, the market is now pivoting toward Small Language Models and quantized versions of larger models like Llama 3.2 or Phi-4. These models need high memory bandwidth and a large pool of GPU-accessible memory to run at interactive speeds. Apple’s decision to offer 128GB of unified memory on a mobile platform effectively removes the “memory wall” that hampers most Windows-based AI PCs, where the GPU typically sees only a fraction of system memory.
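To make the memory argument concrete, here is a minimal sketch of the usual back-of-envelope estimate: weight size is roughly parameter count times bits per weight. The function names and the `usable_fraction` headroom factor are our own illustrative assumptions, not Apple specifications, and the estimate ignores the KV cache, activations, and runtime overhead.

```python
# Rough weight-memory estimate for a quantized model (illustrative only).
# Ignores KV cache, activations, and runtime overhead, which add to the total.


def weight_footprint_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    bytes_total = params_billions * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


def fits_in_memory(params_billions: float, bits_per_weight: float,
                   memory_gb: float, usable_fraction: float = 0.75) -> bool:
    """True if the weights fit in the usable share of unified memory.

    usable_fraction is an assumed headroom factor for the OS and other
    applications; it is a placeholder, not a documented limit.
    """
    needed = weight_footprint_gb(params_billions, bits_per_weight)
    return needed <= memory_gb * usable_fraction


if __name__ == "__main__":
    for bits in (16, 8, 4):
        size = weight_footprint_gb(70, bits)
        ok = fits_in_memory(70, bits, memory_gb=128)
        print(f"70B at {bits}-bit: about {size:.0f} GB of weights, fits in 128 GB: {ok}")
```

Under these assumptions, a 70B-parameter model needs about 140GB at 16-bit precision, which exceeds 128GB, but only about 70GB at 8-bit and 35GB at 4-bit, which is why quantization and large unified memory go together.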

The Fusion Architecture is the most significant part of this announcement. It suggests that Apple is hitting the physical limits of single-die 3nm density and is adopting a modular approach to maintain its performance lead. This allows Apple to scale the Neural Engine and GPU clusters more aggressively than competitors that are still tethered to traditional motherboard-based memory architectures.

For the broader market, this forces a reckoning. If Apple can demonstrate that a 70B-parameter model can run at over 100 tokens per second on a laptop with a 90W power draw, the value proposition of expensive, high-latency cloud inference begins to erode for many enterprise use cases. This is both a defensive and an offensive maneuver to ensure that the next wave of decentralized AI development happens within the macOS ecosystem.
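A throughput figure like this can be sanity-checked with a common rule of thumb: when generating one token at a time, a dense model has to read all of its weights from memory for each token, so memory bandwidth divided by weight size gives an approximate ceiling on decode speed. The sketch below applies that rule to the 614GB/s figure quoted above. The function names are ours, and the model is deliberately simple: it assumes a dense model, single-stream decoding, and no KV cache traffic.

```python
# Bandwidth-bound ceiling on single-stream decode speed (rule of thumb).
# Assumes a dense model whose weights are all read once per generated token.


def decode_tokens_per_second_bound(bandwidth_gb_s: float,
                                   params_billions: float,
                                   bits_per_weight: float) -> float:
    """Upper bound on tokens/s when decoding is limited by memory bandwidth."""
    weight_gb = params_billions * bits_per_weight / 8
    return bandwidth_gb_s / weight_gb


def required_bandwidth_gb_s(target_tokens_per_second: float,
                            params_billions: float,
                            bits_per_weight: float) -> float:
    """Bandwidth needed to reach a target decode speed under the same model."""
    weight_gb = params_billions * bits_per_weight / 8
    return target_tokens_per_second * weight_gb


if __name__ == "__main__":
    bound = decode_tokens_per_second_bound(614, params_billions=70, bits_per_weight=4)
    needed = required_bandwidth_gb_s(100, params_billions=70, bits_per_weight=4)
    print(f"Ceiling at 614 GB/s, 70B at 4-bit: {bound:.1f} tokens/s")
    print(f"Bandwidth for 100 tokens/s: {needed:.0f} GB/s")
```

Under these assumptions, a 4-bit 70B model tops out at roughly 17 tokens per second at 614GB/s, and 100 tokens per second would need about 3,500GB/s. Reaching the higher figure would therefore depend on approaches this simple model does not cover, such as mixture-of-experts models that read only part of their weights per token, speculative decoding, or more aggressive quantization.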

What should enterprises do?

Enterprises should evaluate the M5 MacBook Pro as a strategic investment in data sovereignty and cost control. IT leaders should assess the potential savings of shifting AI workloads from pay-per-token cloud models to local execution on pro-tier hardware. If your organization handles sensitive proprietary data or source code, the ability to run capable models entirely offline provides a level of security that cloud wrappers cannot match. Organizations should begin testing their custom internal models on this hardware to determine whether the performance gains in prompt processing and time-to-first-token justify an accelerated refresh cycle for their technical staff.
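For teams setting up that kind of test, the two numbers worth capturing are time-to-first-token and steady-state decode speed. Here is a minimal, runtime-agnostic sketch: `measure_stream` is a hypothetical helper of our own that times any lazy iterator of tokens, and `fake_stream` is a stand-in for whatever streaming call your local inference runtime actually exposes.

```python
import time
from typing import Dict, Iterable, Iterator, Optional


def measure_stream(token_stream: Iterable[str]) -> Dict[str, Optional[float]]:
    """Time a lazy token stream: time-to-first-token and decode tokens/s.

    The stream must produce tokens lazily (for example a generator);
    a pre-built list would make every timing close to zero.
    """
    start = time.perf_counter()
    first_token_at = None
    count = 0
    for _ in token_stream:
        if first_token_at is None:
            first_token_at = time.perf_counter()
        count += 1
    end = time.perf_counter()

    ttft = None if first_token_at is None else first_token_at - start
    decode_rate = None
    if count > 1 and end > first_token_at:
        # Rate over the tokens that followed the first one.
        decode_rate = (count - 1) / (end - first_token_at)
    return {"tokens": count, "ttft_s": ttft, "decode_tokens_per_s": decode_rate}


def fake_stream(n_tokens: int, prefill_s: float, per_token_s: float) -> Iterator[str]:
    """Placeholder generator that imitates prompt processing, then decoding."""
    time.sleep(prefill_s)
    for i in range(n_tokens):
        if i > 0:
            time.sleep(per_token_s)
        yield f"tok{i}"


if __name__ == "__main__":
    stats = measure_stream(fake_stream(20, prefill_s=0.2, per_token_s=0.01))
    print(stats)
```

Running the same prompts through this harness on current and candidate hardware gives a like-for-like comparison; swap `fake_stream` for the real streaming generator and keep prompt lengths fixed between runs.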

Bottom Line

The M5 MacBook Pro represents Apple’s commitment to dominating the hardware layer of the Edge AI ecosystem. By optimizing its silicon for high-speed local inference, Apple is providing a turnkey platform for the next wave of decentralized intelligence. Enterprises should view these devices as essential infrastructure for developers and data scientists who require high-performance AI tools in a mobile format. The era of the “AI PC” has moved past marketing hype into a phase of tangible, high-bandwidth hardware that can legitimately replace cloud dependencies for a wide array of professional tasks.
