Google Dual TPUs Prepare for Inference Agents

By Jim Lundy

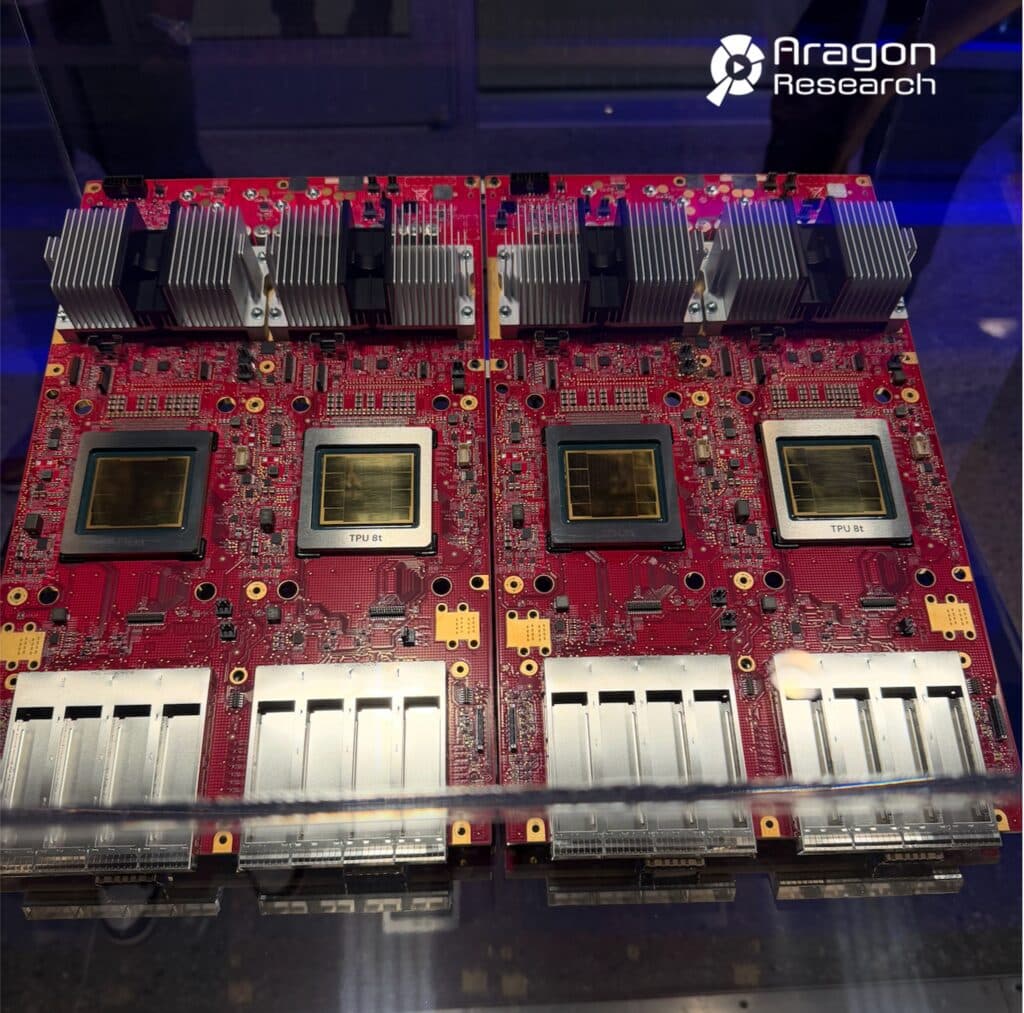

The announcement of Google’s eighth-generation Tensor Processing Units (TPUs) marks a pivotal shift in how hyperscalers approach the hardware needed for the next phase of generative AI. By splitting the line into two specialized architectures, the TPU 8t for training and the TPU 8i for inference, Google is moving beyond general-purpose acceleration to address the specific bottlenecks of the agentic era. This post summarizes the Google Cloud TPU v8 announcement and offers our analysis.

Why Google Announced the TPU 8t and TPU 8i Architectures

The primary driver for this dual-chip release is the evolving nature of AI workloads, which are shifting from simple prompt-response interactions to complex, multi-step autonomous agents. Google recognized that the hardware requirements for training trillion-parameter models are fundamentally different from the low-latency requirements of running “swarms” of inference agents in real time. The TPU 8t is built as a training powerhouse, scaling to 9,600 chips in a single superpod to cut development cycles from months to weeks.

Conversely, the TPU 8i is specialized as a reasoning engine designed to break the “memory wall” that often limits inference performance. By doubling the physical CPU hosts per server and integrating its custom Axion Arm-based CPUs, Google is optimizing the entire stack rather than just the accelerator. This move allows Google to maintain the massive scale required for its own services, like AI Overviews in Search, while offering a commercially viable alternative to third-party silicon for enterprise cloud customers.

Analysis

This announcement is a significant indicator that the era of “one-size-fits-all” AI hardware is coming to an end for the world’s largest cloud providers. While Google remains a partner to other silicon providers, the reported 80% performance-per-dollar gain of the TPU 8i suggests a strategic move toward total infrastructure independence. By controlling the silicon, the liquid cooling, and the interconnect fabrics, Google is effectively insulating its profit margins from the soaring costs of the external GPU market.

The most critical impact of this release is not the raw speed, but the systematic elimination of dependency on the broader merchant silicon supply chain. For years, the industry has been throttled by the availability of specific high-end GPUs. Google’s infrastructure-first approach lets it bypass these market constraints, giving it a growing edge over competitors who must wait in line for the same third-party chips. This vertical integration means Google can deploy agentic capabilities at a cost structure that competitors reliant on external vendors may find impossible to match.

Furthermore, the introduction of the Virgo Network and Boardfly topology addresses the “goodput” problem, where goodput is the share of time spent on productive compute rather than lost to system failures or restarts. By targeting 97% goodput, Google is tackling the operational reality of AI: at frontier scale, even a 1% gain in efficiency translates into millions of dollars in saved energy and time. This level of optimization suggests that Google is no longer just building chips; it is building a proprietary, closed-loop ecosystem that redefines the price floor for high-performance AI services.
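
To make the goodput arithmetic concrete, here is a minimal Python sketch. It assumes goodput is simply productive hours divided by total wall-clock hours, which is a simplification of how any provider actually measures it. The function names, the 30-day run length, and the $2.00 per chip-hour rate are hypothetical placeholders for illustration, not Google figures; only the 9,600-chip superpod size and the 97% target come from the announcement.

```python
def goodput(productive_hours: float, total_hours: float) -> float:
    """Fraction of wall-clock time spent on useful compute (0.0 to 1.0)."""
    if total_hours <= 0:
        raise ValueError("total_hours must be positive")
    if not 0 <= productive_hours <= total_hours:
        raise ValueError("productive_hours must be between 0 and total_hours")
    return productive_hours / total_hours


def wasted_cost(total_hours: float, chips: int,
                cost_per_chip_hour: float, goodput_fraction: float) -> float:
    """Spend attributable to non-productive time (failures, restarts)."""
    if not 0 <= goodput_fraction <= 1:
        raise ValueError("goodput_fraction must be between 0 and 1")
    total_spend = total_hours * chips * cost_per_chip_hour
    return total_spend * (1 - goodput_fraction)


if __name__ == "__main__":
    HOURS = 30 * 24   # hypothetical 30-day training run
    CHIPS = 9_600     # superpod size cited in the announcement
    RATE = 2.00       # hypothetical USD per chip-hour, NOT a real price

    for g in (0.90, 0.96, 0.97):
        loss = wasted_cost(HOURS, CHIPS, RATE, g)
        print(f"goodput {g:.0%}: ${loss:,.0f} spent on lost time")
```

Under these placeholder inputs, each percentage point of goodput is worth 1% of the total run cost, so the dollar value of a one-point gain scales directly with cluster size, run length, and price per chip-hour.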

Strategic Recommendations for Enterprises

Enterprises should evaluate these new TPU offerings as a primary consideration for high-scale inference and long-term model training projects. If your organization is currently facing high latency or prohibitive costs with existing GPU-based inference, the TPU 8i provides a compelling reason to benchmark your workloads within the Google Cloud environment. It is no longer enough to look at raw TFLOPS; enterprises must look at the performance-per-watt and performance-per-dollar metrics that these specialized chips provide.
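
When benchmarking, it helps to normalize results before comparing platforms. The sketch below shows one way to turn raw throughput into performance-per-dollar and performance-per-watt figures. The `BenchmarkResult` type, its field names, and every number in the usage section are hypothetical placeholders, not measurements of any TPU or GPU; substitute values from your own workload runs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BenchmarkResult:
    """One measured run of an inference workload (all fields user-supplied)."""
    name: str
    tokens_per_second: float   # sustained throughput observed in the run
    cost_per_hour_usd: float   # what the instance costs per hour
    avg_power_watts: float     # average power draw during the run

    @property
    def tokens_per_dollar(self) -> float:
        # Throughput per hour divided by hourly cost.
        return self.tokens_per_second * 3600 / self.cost_per_hour_usd

    @property
    def tokens_per_joule(self) -> float:
        # One watt is one joule per second, so this is performance-per-watt.
        return self.tokens_per_second / self.avg_power_watts


def relative_gain(candidate: float, baseline: float) -> float:
    """Fractional improvement of candidate over baseline (0.8 means +80%)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return candidate / baseline - 1


if __name__ == "__main__":
    # Placeholder numbers for illustration only.
    baseline = BenchmarkResult("existing-platform", 1000.0, 10.0, 500.0)
    candidate = BenchmarkResult("candidate-platform", 1800.0, 10.0, 600.0)

    gain_usd = relative_gain(candidate.tokens_per_dollar, baseline.tokens_per_dollar)
    gain_watt = relative_gain(candidate.tokens_per_joule, baseline.tokens_per_joule)
    print(f"performance-per-dollar gain: {gain_usd:+.0%}")
    print(f"performance-per-watt gain:   {gain_watt:+.0%}")
```

Comparing on these normalized metrics, rather than on peak TFLOPS, keeps the evaluation tied to what the workload actually costs to run.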

We recommend that technology leaders understand the specific software requirements, such as JAX or PyTorch, needed to leverage these chips effectively. While Google offers “bare metal” access to reduce virtualization overhead, the transition to custom silicon requires a disciplined approach to software stack compatibility. Consider these TPUs as a strategic tool to lower your total cost of ownership for AI-native applications, especially those involving autonomous agents and real-time reasoning.

Bottom Line

Google’s eighth-generation TPUs represent a maturation of the AI market in which specialized silicon replaces general-purpose acceleration to drive down costs and drive up efficiency. By splitting its architecture into training and inference specialists, Google is positioning itself to lead the agentic era through a vertically integrated stack that its competitors cannot easily replicate. Enterprises should monitor these developments closely and consider moving latency-sensitive workloads to these new architectures to gain a competitive advantage in cost and performance.