Are Voice Biometrics Ready for Primetime Authentication?

by Adrian Bowles, PhD

I was asked recently whether synthetic speech poses a danger in a world where voice is increasingly used to authenticate identity.

First, we need to be careful with terminology. Synthetic speech is not inherently bad. It simply refers to generating natural-sounding, human-like speech. Typically, synthetic speech applications let a user choose a gender and a regional accent, or they attempt to sound like one particular individual. (Google WaveNet does this well.) Benign uses include making interaction with computers more natural and convenient for customers. There are ethical questions about using synthetic speech in applications where the user may not know they are conversing with software, such as Google Duplex, but that is a different issue from using synthetic speech to commit fraud.

Now, let’s separate voice from speech. When humans learn to recognize the voice of a friend, loved one, or business associate, they learn in context. The human voice also has enough physical attributes, which can be captured in digital form, to identify individuals.

Applications can be trained to distinguish a user’s voiceprint, much as a scanner distinguishes a fingerprint.

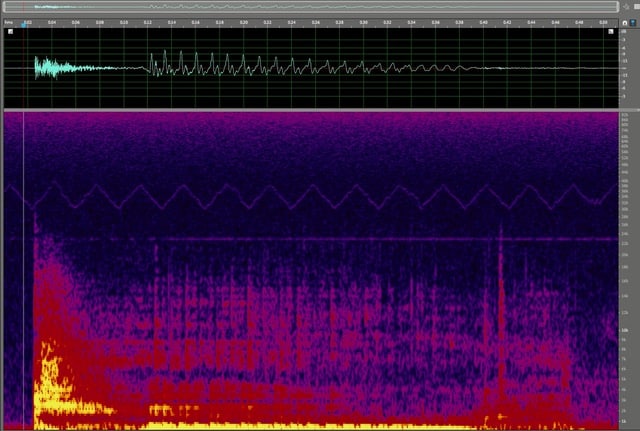

The image above is a spectral frequency display (a view of a digital audio recording) of my voice speaking a common three-letter word. With enough training data, whether from recordings of me collected over time or from me speaking a specific phrase such as “this is my banking password,” an application could learn how I speak well enough to recognize my voice by comparing my input to a stored profile containing my digital voiceprint. I may lower my voice or speak quickly, but I can’t change the parts of the voiceprint that are based on quantitative relationships, such as those between frequencies during normal speech.
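To make the “compare my input to a stored profile” step concrete, here is a minimal Python sketch. It assumes each recording has already been reduced to a fixed-length numeric feature vector (real systems typically derive a speaker embedding from spectral features with a trained model; that step is omitted here). The stored profile is simply the average of the enrollment vectors, and a new sample is accepted if its cosine similarity to the profile clears a threshold. The names `enroll` and `verify`, the toy three-number vectors, and the 0.85 threshold are all hypothetical, chosen only for illustration.

```python
import math
from typing import List, Sequence

# Hypothetical acceptance threshold; a real system would tune this value.
MATCH_THRESHOLD = 0.85


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length feature vectors."""
    if len(a) != len(b):
        raise ValueError("feature vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def enroll(samples: List[List[float]]) -> List[float]:
    """Build a stored profile by averaging the feature vectors of several recordings."""
    if not samples:
        raise ValueError("at least one enrollment sample is required")
    return [sum(column) / len(samples) for column in zip(*samples)]


def verify(profile: Sequence[float], attempt: Sequence[float],
           threshold: float = MATCH_THRESHOLD) -> bool:
    """Accept the speaker only if the new sample is close enough to the stored profile."""
    return cosine_similarity(profile, attempt) >= threshold


# Toy three-number "voiceprints"; real feature vectors are far longer.
profile = enroll([[0.9, 0.1, 0.4], [1.0, 0.2, 0.5], [0.8, 0.15, 0.45]])
print(verify(profile, [0.95, 0.12, 0.48]))  # True: close to the enrolled profile
print(verify(profile, [0.1, 0.9, 0.2]))     # False: far from the enrolled profile
```

Cosine scoring is only one way to compare voiceprints, and a production system would tune its threshold to balance false accepts against false rejects.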

Considerable research has identified patterns that can be used to detect stress and emotions ranging from anger to joy. That is useful, but it doesn’t change the fundamental distinctiveness of the audio signal as an individual’s voice. We can all tell when our friends are angry by the “tone of their voice,” but the speaker is still generally recognizable.

A model of an individual’s voice built by analyzing samples of actual speech should include a representation of the sounds (the physical components); the way the person speaks (behavior, or speech patterns such as pauses, cadence, phrasing, and vocabulary); and perhaps passively collected behavioral data, such as how they hold their telephone. For example, sensors on the phone can determine its angle and which ear is being used.

A Look at Fraud: Intentional Deception of Voice Authentication Technology

As a rule:

Every system designed to authenticate a user to protect real or intellectual property, including data, will be attacked for sport or profit.

Fraud that uses synthesized speech to impersonate an individual and defeat authentication requires an understanding of the system you’re trying to fool, plus a model of the user’s voice that includes every attribute the authentication system monitors. The sound of a voice without the user’s speech patterns should be useless against a good security system.

As the value of access increases, fraudsters are more motivated to invest in technology that can successfully impersonate an authorized user. Deep learning is well suited to building a more complete model of an individual’s voice and speech, because it can find patterns that a human might not detect but that an authentication algorithm may rely on. An authentication system that uses a voiceprint captured with state-of-the-art voice biometrics is still vulnerable to attack, although it is significantly more secure than one that relies solely on passwords, which are drawn from a finite set of possibilities and are therefore vulnerable to brute-force attack.

What Can Call Centers and Potential Victims Do to Protect Against Synthetic Speech Fraud?

Because the same technologies that enable the creation of voiceprints (deep learning, audio and behavioral analytics, etc.) are also available to those who seek to break authentication systems, the best defense is to combine voiceprints with additional data that is difficult for a fraudster to obtain.

Multi-factor authentication for a voice-first access system should include some non-voice components, such as an IP address from a registered device, or behavioral attributes.
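One way to express that policy in code is sketched below, under clearly stated assumptions: the voice score comes from a voiceprint comparison, the set of registered IP addresses is known in advance, and a behavioral check (for example, whether phone angle and ear match the user’s habits) has already produced a yes/no answer. `AuthAttempt`, `authenticate`, and the 0.85 threshold are hypothetical, and the IP addresses come from reserved documentation ranges. The key design choice is that a voice match alone never grants access; at least one non-voice factor must also pass.

```python
from dataclasses import dataclass
from typing import FrozenSet

# Hypothetical minimum voice-match score (0.0 to 1.0 scale assumed).
VOICE_THRESHOLD = 0.85


@dataclass(frozen=True)
class AuthAttempt:
    voice_score: float  # similarity between the caller and the stored voiceprint
    source_ip: str      # IP address the session originated from
    behavior_ok: bool   # result of a hypothetical behavioral check (phone angle, ear used)


def authenticate(attempt: AuthAttempt, registered_ips: FrozenSet[str]) -> bool:
    """Grant access only if the voice matches AND at least one non-voice factor passes."""
    voice_ok = attempt.voice_score >= VOICE_THRESHOLD
    device_ok = attempt.source_ip in registered_ips
    return voice_ok and (device_ok or attempt.behavior_ok)


registered = frozenset({"203.0.113.7"})
# Genuine user on a registered device: accepted.
print(authenticate(AuthAttempt(0.93, "203.0.113.7", False), registered))    # True
# Near-perfect voice clone from an unknown address, unusual handling: refused.
print(authenticate(AuthAttempt(0.99, "198.51.100.23", False), registered))  # False
```

Under this rule, even a flawless synthetic voice is refused unless the fraudster has also obtained a registered device or mimicked the user’s physical behavior.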

Leading-edge solutions today also maintain a database of voiceprints from known fraudsters, similar to directories of fraudulent websites.
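Screening against such a database can be sketched as a nearest-match search: compare the caller’s voiceprint with every stored fraudster voiceprint and flag the closest one if it is similar enough. This minimal Python example assumes voiceprints are fixed-length feature vectors compared by cosine similarity; `match_known_fraudster`, the case identifiers, and the 0.9 threshold are hypothetical. One plausible design is to route a flagged call to human review rather than reject it automatically.

```python
import math
from typing import Dict, Optional, Sequence

# Hypothetical similarity at or above which a caller is flagged as a known fraudster.
WATCHLIST_THRESHOLD = 0.9


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two equal-length voiceprint vectors."""
    if len(a) != len(b):
        raise ValueError("voiceprints must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def match_known_fraudster(
    attempt: Sequence[float],
    watchlist: Dict[str, Sequence[float]],
    threshold: float = WATCHLIST_THRESHOLD,
) -> Optional[str]:
    """Return the ID of the closest watchlisted voiceprint at or above threshold, else None."""
    best_id: Optional[str] = None
    best_score = threshold
    for fraud_id, voiceprint in watchlist.items():
        score = cosine_similarity(attempt, voiceprint)
        if score >= best_score:
            best_id, best_score = fraud_id, score
    return best_id


watchlist = {"case-0142": [0.2, 0.9, 0.3], "case-0377": [0.7, 0.1, 0.7]}
print(match_known_fraudster([0.21, 0.88, 0.31], watchlist))  # case-0142
print(match_known_fraudster([0.9, 0.1, 0.1], watchlist))     # None
```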

For individuals who use voice authentication solutions, it is important to recognize that the weakest link is often a careless user. Insist on multi-factor authentication for any application that has access to your sensitive data. If your service provider uses a catchphrase that you are required to speak to gain access, never utter that phrase outside the application. High-quality audio recorders and digital audio editors are inexpensive, and it gets easier every day to create a realistic audio impersonation of any individual, anywhere.

Bottom Line

To answer my own question, yes, voice biometrics as a component of an authentication process are ready for primetime. As the only line of defense between you and the potential loss of your identity and assets? I’m not willing to risk it.
